External Location Unity Catalog

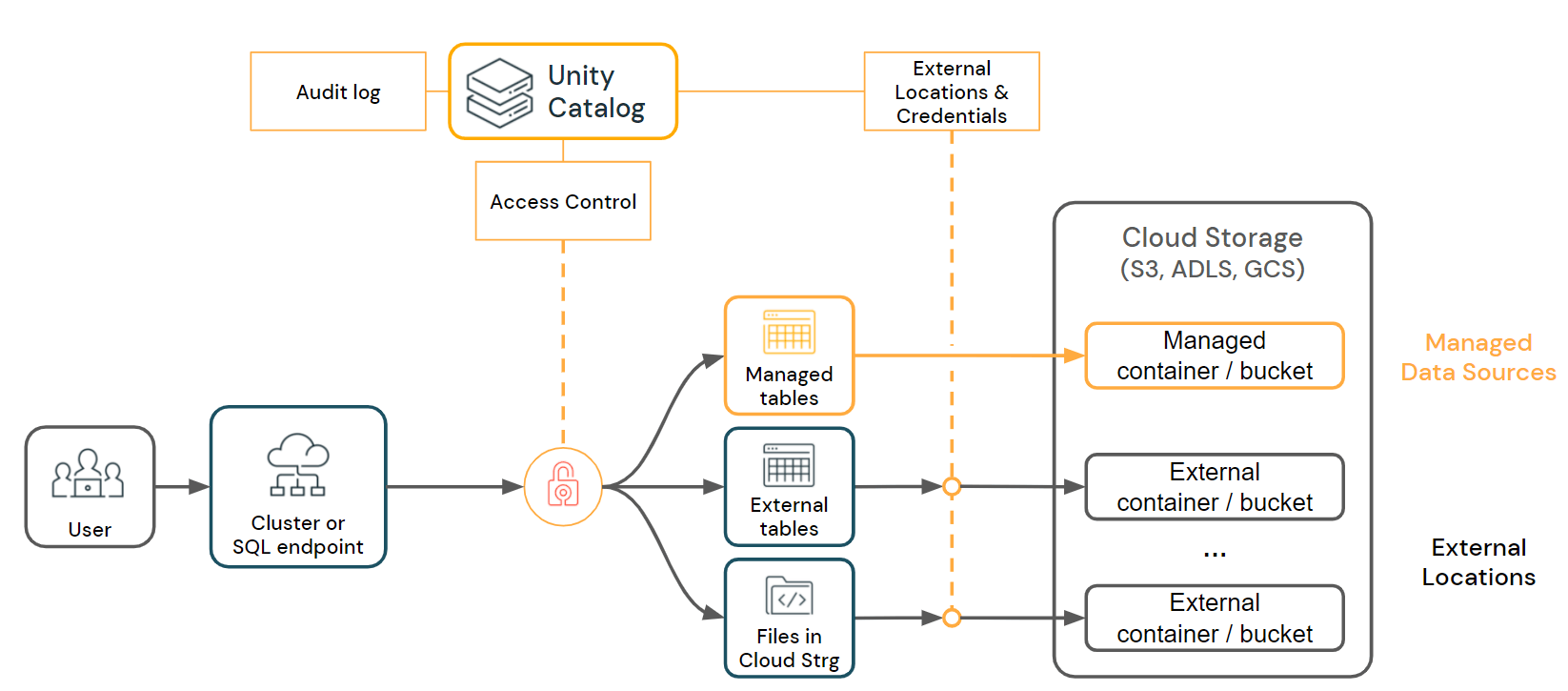

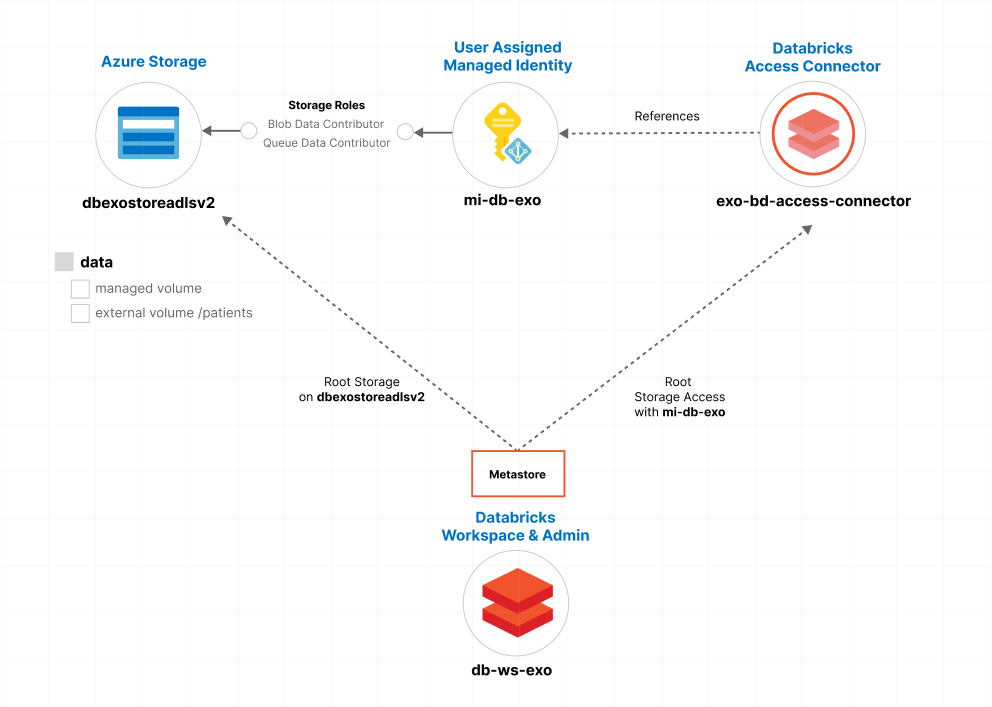

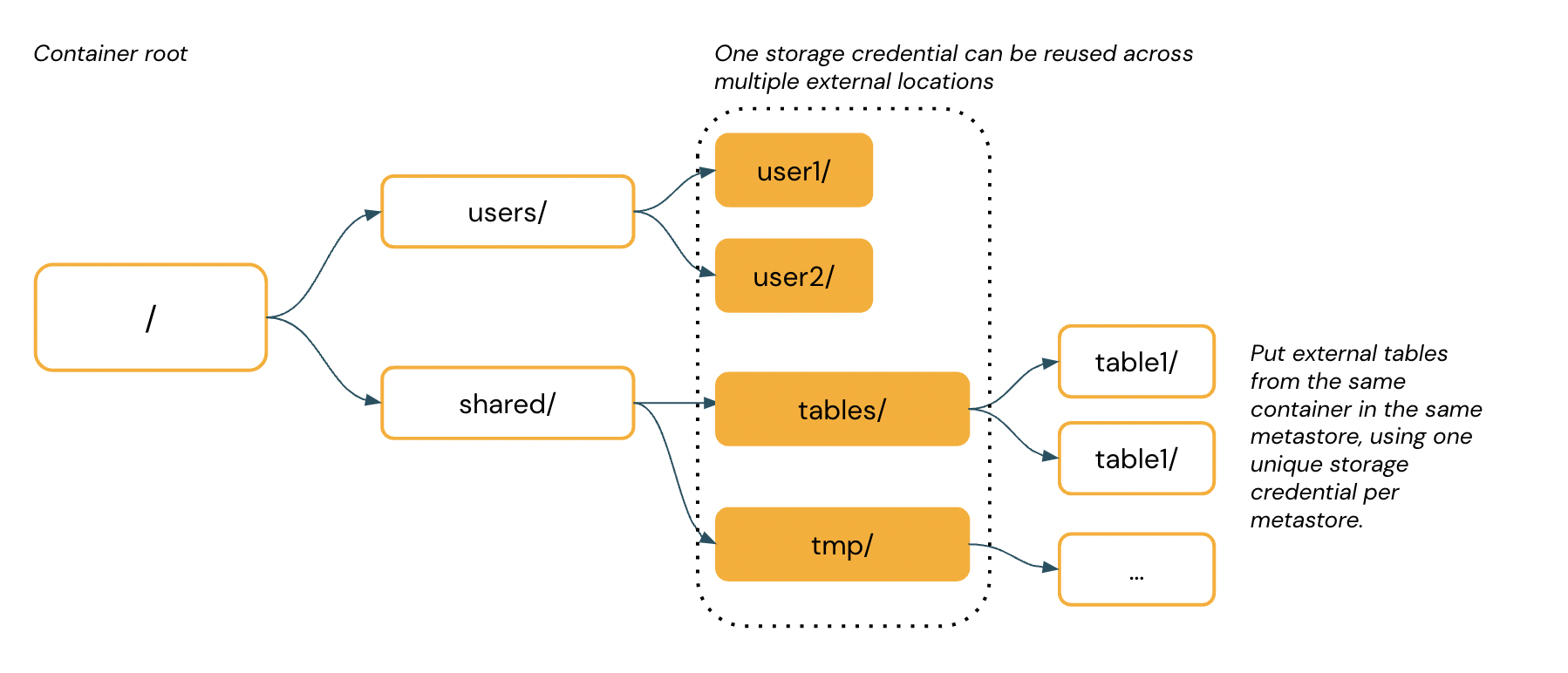

An external location is a Unity Catalog securable object that defines a path to a cloud storage location and the credentials required to access it. Unity Catalog uses external locations to govern access to the underlying cloud storage that holds tables and volumes. Unity Catalog lets you create managed tables and external tables. For managed tables, Unity Catalog fully manages the lifecycle and file layout. For external data, Unity Catalog governs data access permissions for all queries that go through Unity Catalog but does not manage data lifecycle, optimizations, or storage. Databricks recommends governing file access using volumes.

External locations are available in Databricks SQL and Databricks Runtime. An external location can serve as the source when you use the add data UI to create a managed table from data in Amazon S3. Although Databricks recommends against storing data in DBFS root storage, you can configure an external location in Unity Catalog to govern access to your DBFS root storage location. You can create an external location with several tools, including Catalog Explorer, the Databricks CLI, and SQL commands in a notebook or Databricks SQL query.
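
For the SQL route mentioned above, the statement can be assembled in a notebook before it is run. The following is a minimal sketch, not an official helper: the function names, the location name `sales_landing`, the storage URL, and the credential name `example_credential` are all hypothetical placeholders, and the storage credential is assumed to exist already.

```python
def quote_identifier(name: str) -> str:
    """Wrap an identifier in backticks, doubling any embedded backtick."""
    return "`" + name.replace("`", "``") + "`"


def quote_string(value: str) -> str:
    """Render a single-quoted SQL string literal with backslash escaping."""
    return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'"


def create_external_location_sql(name, url, storage_credential, comment=None):
    """Build a CREATE EXTERNAL LOCATION statement as a string."""
    sql = (
        f"CREATE EXTERNAL LOCATION IF NOT EXISTS {quote_identifier(name)} "
        f"URL {quote_string(url)} "
        f"WITH (STORAGE CREDENTIAL {quote_identifier(storage_credential)})"
    )
    if comment is not None:
        sql += f" COMMENT {quote_string(comment)}"
    return sql


# Hypothetical values, for illustration only.
statement = create_external_location_sql(
    name="sales_landing",
    url="abfss://landing@examplestorage.dfs.core.windows.net/sales",
    storage_credential="example_credential",
)
print(statement)
```

In a Databricks notebook the resulting string would typically be passed to `spark.sql(statement)`; the same statement can also be pasted into a Databricks SQL query.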
To create external locations, you must be a metastore admin or a user with the CREATE_EXTERNAL_LOCATION privilege. Configuring an external location in Unity Catalog connects cloud storage to Databricks: external locations associate Unity Catalog storage credentials with cloud storage paths. The workspace catalog is itself made up of three Unity Catalog securables.

Once an external location exists, you can list, view, update, grant permissions on, and delete it.
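
The management operations named above (list, view, update, grant permissions on, and delete) each map to a SQL statement. The sketch below collects one example statement per operation; the function name, the location names, and the principal `data-engineers` are hypothetical, and `READ FILES` is only one of several privileges that could be granted.

```python
def quote_identifier(name: str) -> str:
    """Wrap an identifier in backticks, doubling any embedded backtick."""
    return "`" + name.replace("`", "``") + "`"


def management_statements(location: str, principal: str, new_name: str) -> dict:
    """Return one example SQL statement per management operation."""
    loc = quote_identifier(location)
    return {
        "list": "SHOW EXTERNAL LOCATIONS",
        "view": f"DESCRIBE EXTERNAL LOCATION {loc}",
        "update": f"ALTER EXTERNAL LOCATION {loc} RENAME TO {quote_identifier(new_name)}",
        "grant": (
            f"GRANT READ FILES ON EXTERNAL LOCATION {loc} "
            f"TO {quote_identifier(principal)}"
        ),
        "show_grants": f"SHOW GRANTS ON EXTERNAL LOCATION {loc}",
        "delete": f"DROP EXTERNAL LOCATION IF EXISTS {loc}",
    }


# Hypothetical names, for illustration only.
statements = management_statements("sales_landing", "data-engineers", "sales_raw")
for operation, sql in statements.items():
    print(f"{operation}: {sql}")
```

Building the statements as plain strings keeps the sketch runnable anywhere; on Databricks each one would be executed with `spark.sql(...)` or in a SQL editor by a user who holds the necessary privileges.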
If you are unable to view, delete, or drop an external location in the UI, use metastore admin rights to change ownership to yourself or the required user, and then manage the external location.

You can create an external location that references storage in an Azure Data Lake Storage container or a Cloudflare R2 bucket.

To move an existing managed table to an external table, copy the data from the managed table to the data lake by creating a DataFrame and writing it out.
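
The copy step for moving a managed table can be sketched in PySpark as follows. This is one possible approach under stated assumptions, not the procedure from the original source, whose steps are cut off: the function name and all table names and paths are hypothetical, the target path is assumed to sit under an external location you can write to, the data is assumed to be written as Delta, and the final `CREATE TABLE ... LOCATION` registration is an added assumption. The source managed table is left in place.

```python
def copy_managed_table_to_external(spark, source_table, target_path, target_table):
    """Copy a managed table's data to a storage path and register an external table.

    `spark` is an active SparkSession (provided automatically in Databricks notebooks).
    Table names are passed through unquoted, so they must be valid identifiers.
    """
    # Read the managed table into a DataFrame.
    df = spark.read.table(source_table)

    # Write the data to the data lake path; fail if data already exists there.
    df.write.format("delta").mode("errorifexists").save(target_path)

    # Register an external table that points at the written files.
    escaped_path = target_path.replace("'", "\\'")
    sql = (
        f"CREATE TABLE IF NOT EXISTS {target_table} "
        f"USING DELTA LOCATION '{escaped_path}'"
    )
    spark.sql(sql)
    return sql
```

A call might look like `copy_managed_table_to_external(spark, "main.sales.orders", "abfss://landing@examplestorage.dfs.core.windows.net/orders", "main.sales.orders_ext")`, where every argument is a placeholder.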
On Azure, before setting the container as an external location, we need to grant the Storage Blob Data Contributor role to the connector on the storage, as shown in the screenshot below.

Azure Unity Catalog Access External location with a Service

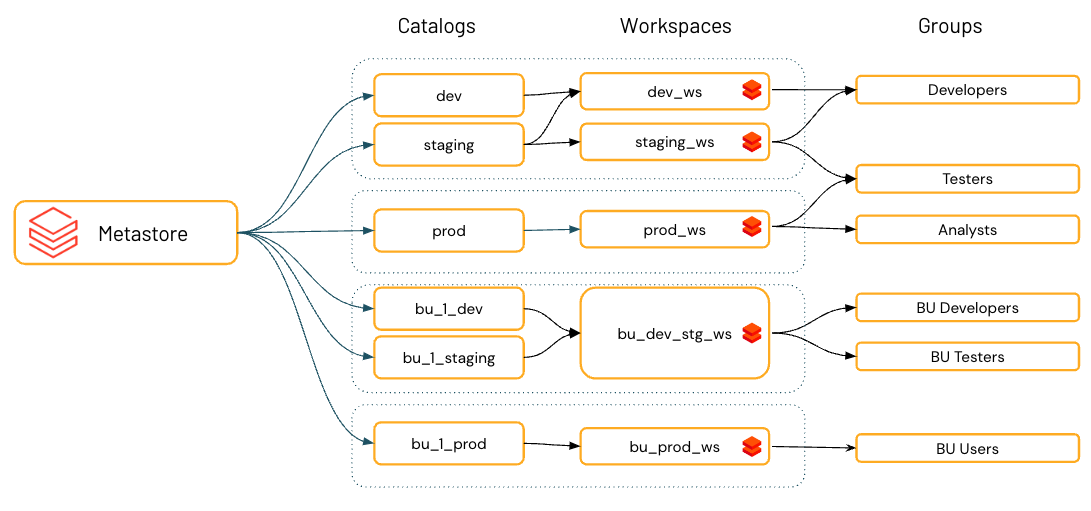

Unity Catalog best practices Azure Databricks Microsoft Learn

An Ultimate Guide to Databricks Unity Catalog — Advancing Analytics

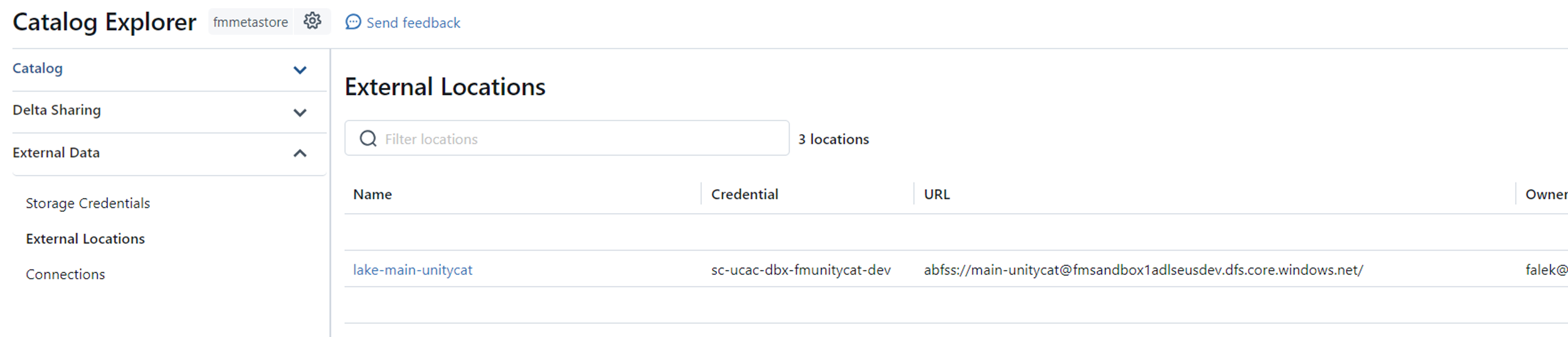

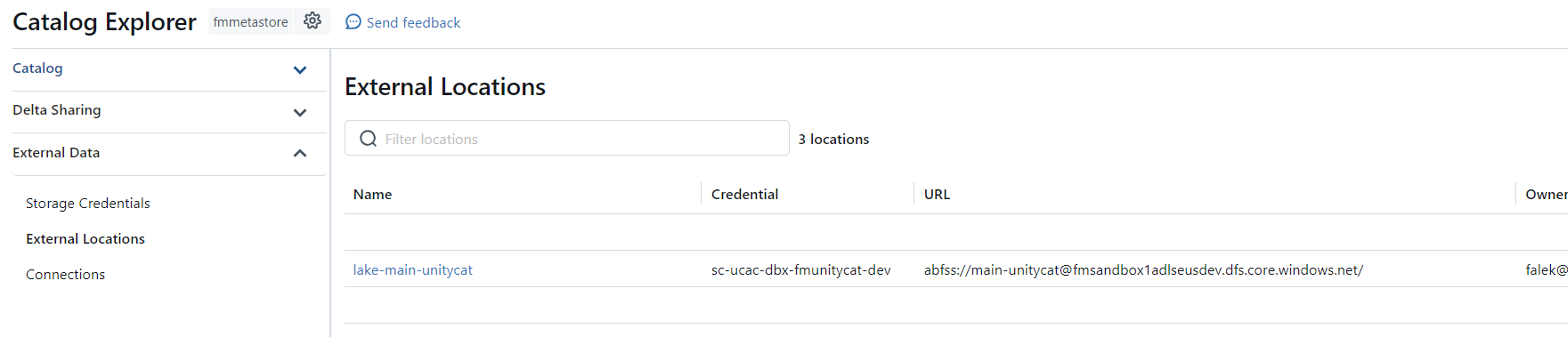

Creating Storage Credentials and External Locations in Databricks Unity

Introducing Unity Catalog A Unified Governance Solution for Lakehouse

How to Create Unity Catalog Volumes in Azure Databricks

Databricks Unity Catalog part3 How to create storage credentials and

12 Schemas with External Location in Unity Catalog Managed Table data
